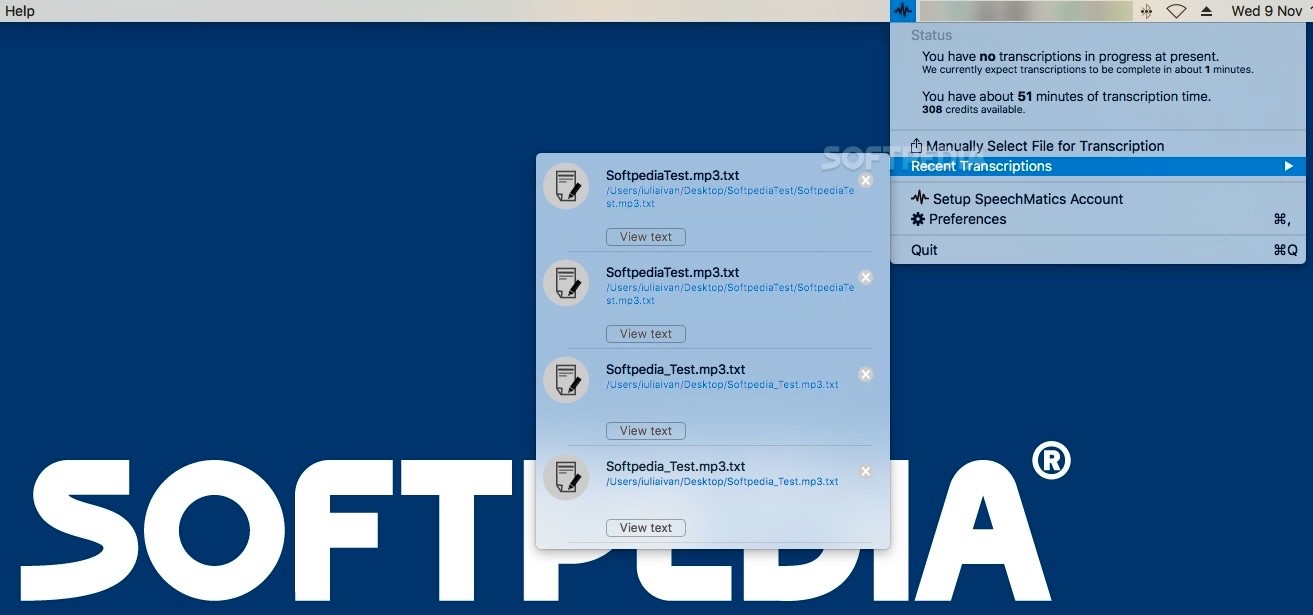

To use Auto transcribe, select it in the "Create captions" dialogue box or in the "Replace captions" dialogue box. You'll need to choose the language that your video is in. Speechmatics supports the languages below. Global English is an excellent general-purpose model, and it's especially good when the speaker is not a native English speaker.

Depending on your subscription, you may have speaker detection enabled. To use this feature, simply enable the checkbox. Speaker detection is applied to the caption metadata, which you'll be able to access and edit on the Edit page.

Depending on your subscription, you may also have custom dictionaries enabled. This feature biases the speech recognition towards a pre-defined list of words, such as names, places, products and custom terminology. To submit a file for transcription with a custom dictionary, you'll need to pre-define the dictionary in Team Settings and then choose it here.

To change speech recognition provider, navigate to Team Settings and change providers from the Transcription sub-menu. The providers have slightly different functionality, all of which is supported in CaptionHub.

NVivo Transcription is an automated, cloud-based transcription service integrated into NVivo 12. It allows you to send media files for transcription directly.

Our AutoTranscribe models are pushing the highest level of development in neural end-to-end ASR, incorporating ASAPP-driven … "AutoTranscribe is designed to operate in a complex, noisy contact center environment at millisecond speeds with the highest real-time transcription accuracy in the industry," said ASAPP Chief Scientist Ryan McDonald.
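CaptionHub makes the provider call for you, and how it builds that request is not documented here. As a rough illustration only, the sketch below shows how the same three choices (language, custom dictionary, speaker detection) would map onto a request to Amazon Transcribe, one of the two providers CaptionHub uses, if you were calling it yourself. `LanguageCode`, `Media`, `VocabularyName`, `ShowSpeakerLabels` and `MaxSpeakerLabels` are parameters of Amazon Transcribe's `StartTranscriptionJob` API, where a "custom vocabulary" plays the role of a custom dictionary. The function name, job name, bucket and vocabulary name are hypothetical, and the request is only built here, not sent.

```python
from typing import Any, Optional


def build_transcription_request(
    job_name: str,
    media_uri: str,
    language_code: str,
    vocabulary_name: Optional[str] = None,
    max_speakers: Optional[int] = None,
) -> dict[str, Any]:
    """Return keyword arguments for Amazon Transcribe's StartTranscriptionJob.

    Hypothetical helper for illustration; not part of CaptionHub or boto3.
    """
    request: dict[str, Any] = {
        "TranscriptionJobName": job_name,
        # The language the video is in, e.g. "en-US".
        "LanguageCode": language_code,
        # Where the media file lives (for example an S3 URI).
        "Media": {"MediaFileUri": media_uri},
    }
    settings: dict[str, Any] = {}
    if vocabulary_name is not None:
        # A custom vocabulary biases recognition toward its listed terms,
        # similar in spirit to a CaptionHub custom dictionary.
        settings["VocabularyName"] = vocabulary_name
    if max_speakers is not None:
        # Speaker labelling needs a maximum speaker count of at least 2.
        if max_speakers < 2:
            raise ValueError("max_speakers must be at least 2")
        settings["ShowSpeakerLabels"] = True
        settings["MaxSpeakerLabels"] = max_speakers
    if settings:
        # Only include Settings when an optional feature was requested.
        request["Settings"] = settings
    return request


if __name__ == "__main__":
    request = build_transcription_request(
        job_name="demo-job",                        # hypothetical
        media_uri="s3://example-bucket/video.mp4",  # hypothetical
        language_code="en-US",
        vocabulary_name="team-terms",               # hypothetical
        max_speakers=2,
    )
    print(request)
```

With boto3, the resulting dict could be passed as `boto3.client("transcribe").start_transcription_job(**request)`, after creating the vocabulary itself with `create_vocabulary`. Leaving `Settings` out when no option is chosen keeps the plain case identical to a request without a custom dictionary or speaker detection.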

Auto transcribe is our most popular option for creating captions. Clicking on it will send an audio file to a speech recognition provider, which returns a raw transcript to us. Using CaptionHub's proprietary Natural Captions© technology, we then process that transcript into perfectly aligned, human-readable captions. We use two different speech recognition providers: Speechmatics and Amazon Transcribe.

ASAPP, the AI Cloud company, has announced the availability of AutoTranscribe and claims it is the most accurate real-time speech-to-text transcription available.

Trint's AI transcription quickly converts your audio and video files to text, making them as editable, searchable and collaborative as a doc. AI and human transcription with industry-leading accuracy, live collaboration, search, and speaker identification.

From the left menu, click on Subtitles, then select Auto Transcribe. Select your preferred language and click Start.

Our Site, autotranscribe.io, is owned and operated by Spicer Solutions of 3 Lorre Mews, Oxley Park, Milton Keynes, Buckinghamshire, MK4 4GY.

To use the Cloud CMS auto-transcribe feature, you will first need to set up a Transcription Service. The service can either be configured as the default Transcription Service for your Project, or it can be referenced from the f:auto-transcribe feature, which "indicates that a node with an attachment should have a transcription produced automatically". Referencing the service from the feature allows different feature configurations to use different services if that is your preference. The feature configuration can specify the name of the source attachment containing the source audio and the `_doc` identifier of the Transcription Service configuration to use. The generated text is stored back onto the content node as an attachment named transcription (a text/plain file). For more information, please see our formal documentation on how Cloud CMS works with Transcription.

With this feature in place, a content instance will automatically have an audio attachment converted to text using speech-to-text conversion. This section describes features that are coming in 4.0.
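The auto-transcribe flow described above can be modelled in a few lines of plain Python. This is a minimal sketch under stated assumptions, not Cloud CMS's implementation or API: `Node`, `Attachment`, `AutoTranscribeConfig`, the `source_attachment` and `service_id` field names, and the default attachment name `"default"` are all hypothetical, and each Transcription Service is stood in for by a plain function from audio bytes to text. It assumes that a service referenced from the feature takes precedence over the project default, and it writes the result back as a `text/plain` attachment named `transcription`.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical stand-in for a Transcription Service: audio bytes in, text out.
Transcriber = Callable[[bytes], str]


@dataclass
class Attachment:
    content_type: str
    data: bytes


@dataclass
class Node:
    # Attachments keyed by name, e.g. "default" or "transcription".
    attachments: dict[str, Attachment] = field(default_factory=dict)


@dataclass
class AutoTranscribeConfig:
    # Hypothetical field: name of the attachment holding the source audio.
    source_attachment: str = "default"
    # Hypothetical field: _doc id of the Transcription Service to use.
    service_id: Optional[str] = None


def auto_transcribe(
    node: Node,
    config: AutoTranscribeConfig,
    services: dict[str, Transcriber],
    project_default: Optional[str],
) -> Optional[Attachment]:
    # Assumption: a service referenced by the feature overrides the project default.
    service_id = config.service_id or project_default
    if service_id is None:
        raise LookupError("no Transcription Service configured")
    source = node.attachments.get(config.source_attachment)
    if source is None:
        return None  # the node has no audio to transcribe
    text = services[service_id](source.data)
    # Store the generated text back onto the node as a text/plain attachment.
    result = Attachment("text/plain", text.encode("utf-8"))
    node.attachments["transcription"] = result
    return result
```

Keeping the service lookup separate from the node means two feature configurations can point at different services while sharing the same project default, which is the flexibility the text describes.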
